Sony’s new CLEWS tech traces AI-generated music back to its human sources, and streaming platforms face...

Key Highlights:

- Sony’s CLEWS technology detects “musical DNA” in AI-generated tracks, moving beyond exact audio matching to identify melodic and structural similarities

- The system reverse-engineers AI outputs to determine which copyrighted songs influenced their creation, enabling pro-rated royalty payments

- Streaming platforms face pressure to integrate the tech as a defense against the 30,000+ AI tracks uploaded daily and flooding their catalogs

The Music Industry’s Content ID Moment Arrives

Sony Group has deployed technology that traces the creative lineage of AI-generated music back to its human sources. This marks the industry’s pivot from blocking generative AI to building infrastructure that taxes it.

The technology, called CLEWS (Contrastive Learning from Weakly-Labeled Segments), analyzes 20-second audio segments to identify semantic similarities. Unlike Shazam’s exact-match fingerprinting, CLEWS detects when two pieces “feel like versions of each other” even when they sound different.
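To make the segment-level idea concrete, here is a minimal sketch of how two tracks could be compared window by window. It is not Sony's model: the embedding vectors are toy values standing in for the output of a trained network, and the names `split_segments`, `cosine`, and `best_segment_match` are hypothetical. The only detail taken from the description above is the 20-second window.

```python
import math

def split_segments(samples, sample_rate, seconds=20):
    """Cut a list of samples into consecutive fixed-length windows.
    The final window may be shorter than the rest."""
    size = sample_rate * seconds
    return [samples[i:i + size] for i in range(0, len(samples), size)]

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def best_segment_match(track_a, track_b):
    """Score two tracks by their most similar pair of segment embeddings."""
    return max(cosine(a, b) for a in track_a for b in track_b)

# Toy per-segment embeddings (assumed values, not real model output).
original = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]
reworked = [[0.5, 0.5, 0.7], [0.0, 0.9, 0.1]]
unrelated = [[0.0, 0.0, 1.0]]

print(best_segment_match(original, reworked))
print(best_segment_match(original, unrelated))
```

Scoring on the best-matching pair means a single shared passage can link two tracks even when the rest of the audio differs, which is the behavior the "versions of each other" description implies.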

This follows Sony Music’s 2024 letters to 700 AI companies prohibiting text and data mining. The legal warning came first. Now comes the enforcement mechanism.

Sony Says It Has Solved the AI Attribution Black Box

The takeaway: Sony’s tech connects AI training sets to rights holders, making a licensing market possible.

According to Sony AI’s research, CLEWS uses “training data attribution via unlearning” to reverse-engineer which songs most influenced an AI-generated track. Sony presents this as the answer to an attribution problem that AI companies claimed was impossible to solve.

“We are moving from a binary world of ‘infringing or not infringing’ to a nuanced ledger of attribution,” said Dennis Kooker, President of Global Digital Business at Sony Music. “If an AI model relies on 10% of a Beyoncé track to generate a new hit, the original creators deserve to be recognized and compensated.”

The deployment integrates into Sony’s SoundPatrol partnership with Universal Music Group. Industry sources suggest this creates the foundation for AI platforms to pay pro-rated royalties based on a copyrighted track’s “weight” of influence.
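The arithmetic behind a pro-rated payout is simple to sketch. The example below assumes each influence weight is a fraction of the whole output (0.10 for the 10% case Kooker describes) and that the same fraction of a royalty pool goes to that track. The function name `split_royalties`, the pool size, and the `unattributed` bucket are illustrative assumptions; no actual payout formula has been published.

```python
def split_royalties(pool_cents, influence_weights):
    """Divide a royalty pool (in cents) by each track's influence weight.

    Weights are fractions of the whole output and must not sum to more
    than 1. Whatever is left over is reported as 'unattributed'; who
    keeps that share is an open question, not something Sony has stated.
    """
    weights = influence_weights.values()
    if any(w < 0 for w in weights) or sum(weights) > 1 + 1e-9:
        raise ValueError("weights must be non-negative and sum to at most 1")
    payouts = {
        track: round(pool_cents * weight)
        for track, weight in influence_weights.items()
    }
    payouts["unattributed"] = pool_cents - sum(payouts.values())
    return payouts

# A $1,000.00 pool where two source tracks carry 10% and 5% influence.
print(split_royalties(100_000, {"track_a": 0.10, "track_b": 0.05}))
```

Working in whole cents keeps the shares and the remainder adding up to the pool exactly, which matters once the ledger is audited.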

Detection Tech Raises Style Copyright Concerns

The AI flood driving this technology is staggering. Platforms receive over 30,000 AI-generated tracks daily, most generating zero streams while diluting royalty pools. Streaming fraud operations use AI-generated noise to siphon payments from human artists.

But “semantic fingerprinting” creates new risks. Legal experts warn the technology blurs the line between protecting compositions and copyrighting musical styles. A producer using common chord progressions or splice-pack loops could face algorithmic accusations of infringement.

Security researchers have demonstrated that adversarial audio attacks fool neural detection systems with imperceptible noise. This will spawn a cat-and-mouse game where AI generators learn to cloak outputs.

Independent artists face familiar problems. Majors have resources to whitelist catalogs and dispute false positives. Indies lack easy appeal mechanisms, echoing early YouTube Content ID struggles. The identity versus copyright battle extends beyond melody to artist likeness.

Producers Must Archive Session Files Now

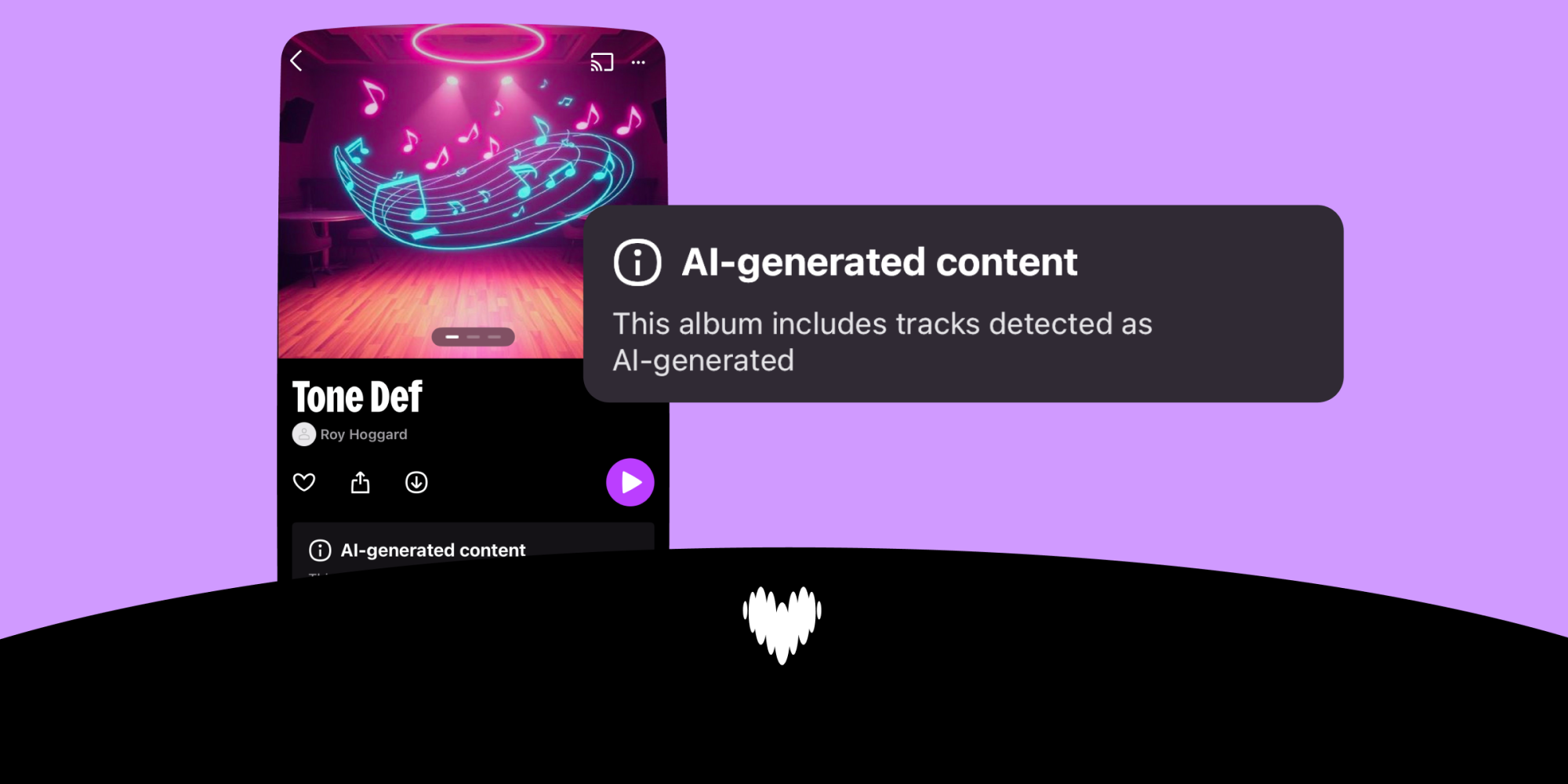

Expect streaming services to announce “Clean” versus “Unverified” content tiers within months. The CLEAR Act adds legislative pressure alongside Sony’s technical enforcement. Startups like AIxChange are building parallel attribution and revenue systems.

Your DAW project file becomes your alibi in this new landscape. Archive stems, MIDI notes, raw vocal takes, and collaborator communications. If an algorithm flags your track as AI-generated or semantically infringing, granular proof of human creation is your only defense.
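One low-effort way to start is a hash manifest of the session folder. The sketch below uses only Python's standard library; the function names and the manifest layout are assumptions made for illustration, not an industry format. A manifest like this shows only that the files existed in this exact form when it was written, so it supports a provenance claim rather than proving authorship on its own.

```python
import hashlib
import json
from datetime import datetime, timezone
from pathlib import Path

def sha256_of(path, chunk_size=1 << 20):
    """Hash a file in chunks so large stems do not need to fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()

def build_manifest(session_dir):
    """Record the SHA-256 hash and size of every file under session_dir."""
    root = Path(session_dir)
    files = {}
    for path in sorted(root.rglob("*")):
        if path.is_file():
            files[path.relative_to(root).as_posix()] = {
                "sha256": sha256_of(path),
                "bytes": path.stat().st_size,
            }
    return {
        "created_utc": datetime.now(timezone.utc).isoformat(),
        "files": files,
    }

def write_manifest(session_dir, out_path):
    """Save the manifest as JSON. Keep out_path outside session_dir so a
    later run does not hash the previous manifest."""
    manifest = build_manifest(session_dir)
    Path(out_path).write_text(json.dumps(manifest, indent=2))
    return manifest
```

Running `write_manifest` at each project milestone and storing the output alongside your backups gives you a dated record to point to if a track is flagged.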

Consider ethical AI licensing tools that pre-clear outputs. Embed Content Credentials metadata at creation to digitally sign your work as human-made before it reaches the internet. Provenance is the new copyright.