Key Highlights:

- Diplo posted an AI-generated video falsely showing himself as a bush dancer during Bad Bunny’s Super Bowl halftime performance

- Visual inconsistencies exposed the fake, including a stadium roof that doesn’t exist at Levi’s Stadium

- The stunt cost pennies compared to the $10 to $15 million production it hijacked for attention

Diplo’s AI Stunt Hijacked a $15 Million Show

A DJ with a $20 software subscription generated more buzz than dancers paid $18.70 an hour to wear 50-pound costumes for eight days of rehearsals.

Days after Bad Bunny’s historic Super Bowl LX halftime performance, Diplo posted a video appearing to show himself inside one of the show’s famous bush costumes. The clip went viral. Fans flooded comments with “industry plant” jokes.

Then eagle-eyed viewers noticed the stadium in Diplo’s video had a roof. Levi’s Stadium in Santa Clara does not.

This wasn’t a cameo. It was a prompt.

Billboard Spotted the Costume Inconsistencies

The original reporting from NME confirmed multiple visual errors in Diplo’s clip.

As Billboard pointed out, the costume Diplo wore didn’t match the taller, spikier designs from the actual performance. His video also lacked the elaborate staging elements that defined Bad Bunny’s set.

The real show featured confirmed celebrity appearances from Pedro Pascal, Cardi B, Jessica Alba, and Karol G among the dancers. Performers earned union scale wages for their physically demanding work. Diplo earned millions of impressions for zero physical labor.

From Mimicking Sound to Faking Access

Friendly Disclaimer: This is an AI-generated image


The takeaway: We’ve moved from AI copying artistic style to AI fabricating physical presence at real events.

The 2023 “Heart on My Sleeve” track proved AI could mimic an artist’s voice and style. Diplo’s bush video proves AI can now mimic access itself. Inserting a person into footage of a real event, an effect that once took a Forrest Gump-sized film budget, now runs on a smartphone.

This creates what researchers call “Digital Stolen Valor.” Artists will soon claim studio sessions they missed, collaborations with legends they never met, or festival appearances they weren’t booked for. The “pics or it didn’t happen” era is dead.

Bad Bunny invested months crafting a performance centered on Puerto Rican identity. Diplo turned that cultural statement into a meme template with a few minutes of rendering time. The ecosystem rewards the speed of the lie more than the accuracy of the truth.

Green Day recently called out Will Smith for allegedly using AI to fake enthusiastic concert crowds. The pattern is emerging.

Audit Your Digital Likeness Rights Now

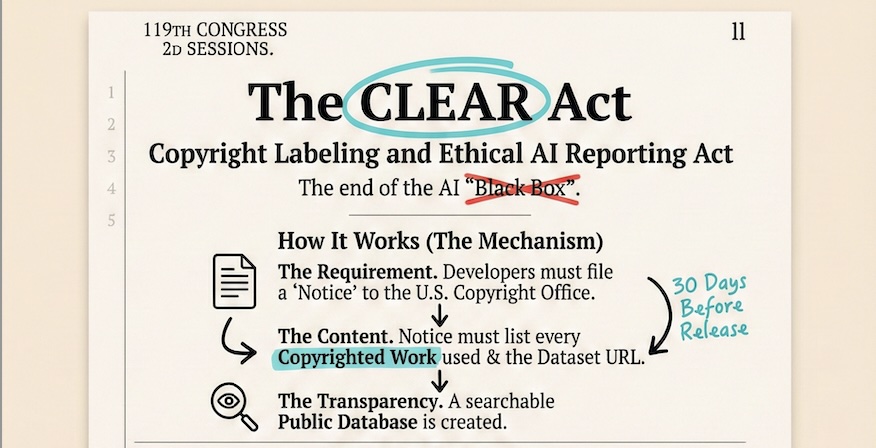

CLEAR Act Infographic


Legislation is catching up. Tennessee’s ELVIS Act now explicitly targets voice and likeness misuse by AI. The federal NO FAKES Act is gaining traction. The CLEAR Act, introduced in February 2026 (see image above), would require AI companies to disclose their training data.

Your contracts need updating. Ensure they explicitly address who can generate your image. If you’re a promoter, update media accreditation policies. Technical solutions like C2PA content credentials will soon become standard for verifying authentic footage.

Understanding how AI video tools actually work helps you spot fakes. Protecting your likeness is no longer optional.

What’s a prank today becomes a lawsuit tomorrow.