Key highlights

- Soderstrom says Spotify won’t act as “the arbiter of which tools artists are allowed to use,” comparing AI to electric guitars and synthesizers

- Spotify’s technology for fan-made AI derivatives is ready. The only barrier is a licensing framework Spotify would control

- Spotify has never disclosed what data its AI systems were trained on, despite holding one of the world’s largest audio and behavioral datasets

Spotify’s new co-CEO arrives at SXSW

Gustav Soderstrom became Spotify co-CEO alongside Alex Norstrom in January 2026, succeeding founder Daniel Ek, who moved to executive chairman. His first major public appearance as co-CEO was a featured session at SXSW on March 13.

He arrived with a new feature: Taste Profile, a beta personalization tool that lets listeners customize their own recommendation algorithm. Spotify says 80% of its users rank personalization as the platform's most valued feature.

On Spotify’s Q4 2025 earnings call, Soderstrom described a growing catalog as “always very good for us” when asked about AI-generated music. At SXSW, he clarified where the platform stands on AI tools and what it plans to build next.

Soderstrom declined to say what percentage of Spotify's catalog is AI-generated. His position: "Spotify should not act as the arbiter of which tools artists are allowed to use." He placed AI alongside the electric guitar, the synthesizer, and the digital audio workstation (DAW) as tools once considered controversial and now standard.

He outlined two categories of AI music: original AI-generated music already on the platform, and AI derivatives, meaning fan-made remixes, covers, and reinterpretations of existing songs.

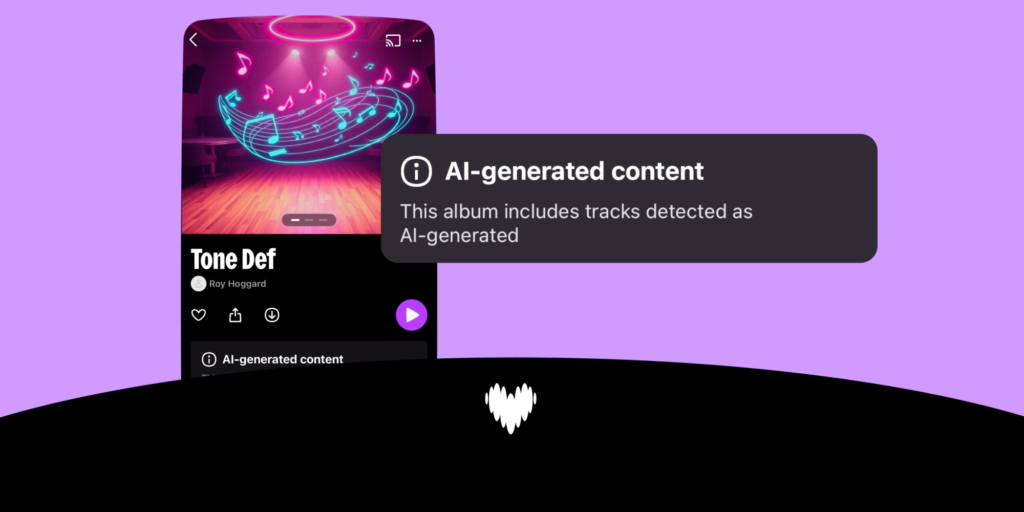

On the Q4 call, he called AI derivatives "an untapped opportunity for artists to make money off of their existing IP" and confirmed the technology is ready; the only hold-up is an industry licensing framework. Spotify currently asks artists to disclose AI use through DDEX metadata fields before uploading. No equivalent disclosure requirement applies to Spotify itself.

The training data question nobody pressed

The takeaway: Spotify asks artists to declare their AI use through DDEX fields but has never declared what its own AI was trained on.

Music technology writer Chris Castle published a detailed analysis of what Spotify's AI may have been trained on in February 2026, calling it "the sinister question Spotify has not answered." The platform holds recordings, stems, listener behavioral data, and performance analytics. That dataset was licensed for streaming, not for training AI systems. No creator has any way to verify whether their work has already trained the derivative tools Spotify is about to offer them.

The same month, Spotify cited AI risks as the reason for restricting developer API access, blocking third parties from the same artist data the platform holds internally. In Spotify's framing, AI risk from outside is a problem to manage, while its own AI use requires no disclosure.

Deezer publicly flags 60,000 AI-generated tracks a day and publishes its detection methods. Spotify ran its own AI crackdown to protect the royalty pool, removing millions of tracks. Its transparency applies outward and stops at the company's front door.

The music publishers who sued Anthropic for $3 billion are making the same argument: undisclosed AI training data is a legal liability, not an ethical abstraction. Spotify has not addressed this exposure in public.

Before Spotify’s next pitch arrives

The derivatives pitch is coming, and Spotify's position is already set: let fans remix your catalog on the world's leading music platform, and earn royalties through a system Spotify controls.

The TRAIN Act, backed by all three major labels and most major songwriter organizations, would require AI companies, upon request, to disclose whether your copyrighted work was used in their training data. Until it passes, or Spotify discloses voluntarily, you have no way to know whether your catalog already trained what the platform is about to sell back to you.

UK legislation on AI training is moving on the same timeline, so the regulatory pressure is real. Before you opt in to any Spotify AI feature, ask one question: what did you train this on?

Frequently asked questions

What did Spotify co-CEO Gustav Soderstrom say about AI music at SXSW 2026?

Soderstrom said Spotify should not police which AI tools artists use, comparing AI to electric guitars and synthesizers. He outlined two categories of AI music: original AI-generated music and fan-made derivatives such as remixes and covers. He has said the technology for derivatives is ready and is waiting only on a licensing framework.

What is Spotify’s AI derivatives plan?

Spotify plans to let fans create AI-generated remixes and covers of existing songs, with the platform acting as the licensing hub. Soderstrom described it on the Q4 2025 earnings call as “an untapped opportunity for artists to make money off of their existing IP,” with royalties flowing through Spotify’s system.

Has Spotify disclosed what its AI was trained on?

No. As of March 2026, Spotify has not publicly disclosed what data its AI systems were trained on. Music Tech Policy writer Chris Castle raised this question in February 2026, noting Spotify’s audio and behavioral datasets were licensed for streaming, not AI training.

What is the TRAIN Act and how does it affect Spotify?

The Transparency and Responsibility for Artificial Intelligence Networks Act, introduced by Senator Peter Welch, would require AI companies to disclose whether copyrighted works were used in training data, upon a rightsholder's request. It is backed by all three major labels, the RIAA, and major songwriter organizations. If it passes, artists could use that request process to ask Spotify whether their catalog was used to train its AI systems.

What does Soderstrom’s per-stream regret mean for artists?

Soderstrom said his biggest regret is that Spotify "let the per-stream discussion take root." What he regrets is the discussion becoming a public narrative, not the $0.003 per-stream rate itself. The rate has not changed. The framing has.